Open any AI assistant in Europe and you are probably talking to an American company. OpenAI, Google, Anthropic, Meta, Microsoft: these names own the default.

That is not inevitable. It is the result of earlier investment and faster distribution. EU AI infrastructure exists, but it rarely gets chosen first.

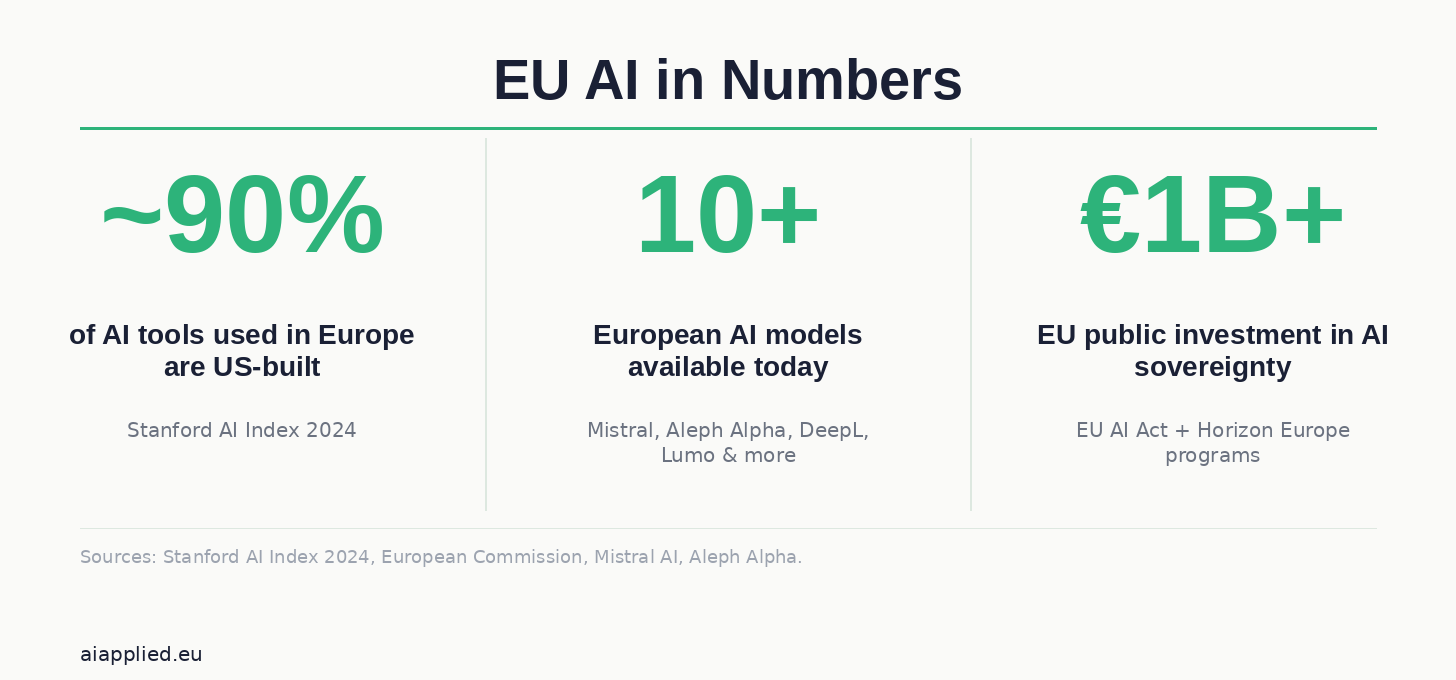

European AI exists, it is capable, and it almost never gets chosen first.

That gap has real consequences for privacy, for sovereignty, and for who gets to shape AI for hundreds of millions of people.

The Quiet US Default

Most Europeans using AI are, by default, using American AI.

ChatGPT, Gemini, Claude, Copilot: these tools are pre-loaded, integrated, already there. Every one of them is run by a US company, so the European data they process also falls within reach of US law.

The default is never neutral; it shapes what data leaves Europe, who trains on it, and whose rules apply.

Users rarely choose US AI deliberately. They choose what is already in front of them.

Privacy: What Happens to Your Data

Every prompt sent to a US AI system crosses jurisdictions.

GDPR sets strict rules for European data. US surveillance law, specifically the CLOUD Act and FISA Section 702, gives American authorities potential access to data held by US companies.

That tension with GDPR has never been fully resolved, and AI makes it sharper every year.

Model training is another open question. Does your input help train the next version? Policies vary, and almost no one reads them.

The Sovereignty Argument

Sovereignty is about more than data. It is about who sets the standards.

A continent that depends entirely on foreign AI infrastructure has no real say in how that AI develops.

What languages get prioritised? What content policies apply? What safety trade-offs get made? Those decisions happen in San Francisco, not Brussels, and they compound over time.

There is also dependency risk. Pricing changes, export restrictions, or geopolitical shifts can alter access fast.

AI Tools Being Built in Europe

AI built in Europe is not a future project. It is here, it is running, and it is capable.

Aleph Alpha (Germany) built the Luminous models and now offers PhariaAI, focused on explainability and enterprise use in regulated industries.

Mistral AI (France) released Mixtral, an open-weight model that matched or beat GPT-3.5 on most standard benchmarks when it launched.

EuroLLM is a multilingual project covering all 24 official EU languages, built for Europe’s language diversity from the start.

BLOOM is an open-access multilingual large language model from a global research collaboration, and one of the first credible open alternatives to GPT-3.

Black Forest Labs (Germany) built Flux, an image generation model that rivals Midjourney and Stable Diffusion.

DeepL is already the default for millions of European professionals who want high-quality translation. It is German-built and GDPR-compliant.

Lumo by Proton is a privacy-first AI assistant from the company behind ProtonMail. It uses end-to-end encryption and is built around GDPR alignment, not data harvesting.

Celonis (Germany) leads in process mining AI: enterprise software that maps how organisations actually operate and surfaces inefficiencies.

LLMs4EU is an EU-funded initiative building open, multilingual models shaped by European values and regulatory expectations.

The European Open Source AI Index tracks this growing ecosystem and is worth bookmarking.

The landscape is broader and more capable than most people assume; the challenge is that it is not where people look first.

Why These Tools Stay in the Margins

Accessibility is the first barrier. US tools have larger developer ecosystems, better marketing, and wider integrations.

A European AI company that cannot get into the default app store or browser extension loses the race before it starts.

This is partly a distribution problem and partly a brand problem. Most people have simply never heard of Mistral or Aleph Alpha.

Data guarantees are the second issue. Users need to know, simply and clearly, what happens to their information. Most alternatives have not yet communicated this in plain language.

Third: public sector adoption. Governments and institutions should be anchor customers for European alternatives, but procurement is slow and risk-averse.

A single large government contract can do more for an EU AI startup’s visibility and credibility than years of marketing.

Fourth: open source support. Open-weight models like Mixtral can be self-hosted, audited, and modified, which is a genuine privacy advantage.

That advantage requires technical capacity most teams do not have, so it needs to be paired with better tooling and real infrastructure support.

The EU AI Act: What It Does and What It Does Not

The EU AI Act is the world’s first comprehensive AI regulation. It entered into force in August 2024 and applies in phases, with most obligations fully active by August 2026.

The Act classifies AI systems by risk level, from outright bans to transparency requirements, with the most stringent rules reserved for high-risk uses.

Prohibited since February 2025: AI used for social scoring, subliminal manipulation, exploiting vulnerabilities such as age or disability, and real-time remote biometric identification in public spaces by law enforcement, the last with narrow exceptions.

High-risk categories, subject to the strictest compliance rules from August 2026, include AI used in hiring, credit scoring, law enforcement, border control, healthcare, education, and critical infrastructure.

Providers of high-risk systems must document their models, implement ongoing risk management, allow human oversight, and register with EU authorities before going to market. Fines reach €35 million or 7% of global annual turnover for prohibited practices, and €15 million or 3% for most other violations.
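To make the scale of that top-tier cap concrete, here is a minimal Python sketch of the arithmetic. It assumes the "or" means "whichever is higher", which is how the cap is generally read for large companies; smaller companies may be treated differently, and actual fines are set case by case. The function name and the example turnover figures are hypothetical.

```python
# Illustrative only: these are the top-tier figures quoted in the text above.
FIXED_CAP_EUR = 35_000_000      # EUR 35 million
TURNOVER_SHARE_PERCENT = 7      # 7% of global annual turnover


def max_fine_eur(global_turnover_eur: int) -> int:
    """Upper bound on a fine, ASSUMING the higher of the two caps applies."""
    turnover_based = global_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


# Hypothetical company with EUR 100 million turnover:
# 7% is EUR 7 million, so the fixed EUR 35 million cap dominates.
print(max_fine_eur(100_000_000))

# Hypothetical company with EUR 10 billion turnover:
# 7% is EUR 700 million, far above the fixed cap.
print(max_fine_eur(10_000_000_000))
```

Under this reading, the percentage only starts to matter once turnover passes €500 million, which is why the turnover-based cap is the one that bites for the largest providers.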

For general-purpose AI, including models like GPT-4 and Claude, obligations around transparency and systemic risk assessment have applied since August 2025.

This matters for sovereignty because the Act applies to any AI sold or used in the EU, regardless of where it is built. US providers must comply or exit the European market.

That is meaningful leverage. But it is not the same as having alternatives. Compliance does not make a foreign AI system European; it makes it less harmful. The gap between “less harmful” and “genuinely independent” is where the EU AI Act runs out of answers.
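The phased dates mentioned in this section can be gathered into a small lookup. The Python sketch below is only an illustration: it uses the months given in the text, sets the day of the month to 1 as a placeholder assumption, and the names `MILESTONES` and `obligations_in_effect` are hypothetical.

```python
from datetime import date

# Milestones as stated in this article, at month granularity.
# The day-of-month (1) is a placeholder assumption, not the legal date.
MILESTONES = [
    (date(2024, 8, 1), "Act in force"),
    (date(2025, 2, 1), "Prohibited practices banned"),
    (date(2025, 8, 1), "General-purpose AI obligations apply"),
    (date(2026, 8, 1), "High-risk system rules apply"),
]


def obligations_in_effect(on: date) -> list[str]:
    """Return the milestones from the list above that have started by `on`."""
    return [label for start, label in MILESTONES if start <= on]


# Which phases had started by the end of 2025?
for label in obligations_in_effect(date(2025, 12, 31)):
    print(label)
```

A lookup like this is a reading aid, not a compliance tool: it says which phases have started, not whether a given system falls under them.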

What Needs to Change

Regulation alone will not shift the default.

The EU AI Act matters. GDPR matters. But rules that restrict US AI do not automatically make alternatives more visible or easier to use.

The real intervention is investment and placement: fund the alternatives, then make sure they appear where people actually look.

Public institutions should pilot home-grown models first. Schools, hospitals, and government agencies are exactly the right test beds.

Citizens deserve a simple, honest answer to: “If I use this AI, what happens to my data?” Right now that answer is buried in legal text. It should be the first thing on the page.

Open source investment also matters. Better documentation, hosted demos, and integration libraries lower the barrier for organisations that want to self-host but lack the capacity today.

The US AI ecosystem grew because developers found it easy to build on. Europe needs to replicate that ease, not just the models.

US AI dominance is not a law of nature; it is the result of earlier investment, faster distribution, and better defaults.

Europe has the regulation, the talent, and increasingly the models. What is missing is the commitment to make these alternatives the obvious first choice, not the principled workaround. That is a political and industrial decision, and it is entirely within reach.

FAQ about European AI

What is European AI and how is it different from US AI?

The term covers AI systems built by European companies or research institutions. These tools are designed with GDPR compliance and data protection as genuine priorities, rather than afterthoughts. Companies like Mistral AI, Aleph Alpha, and DeepL build capable models with explicitly European data practices.

Is European AI as capable as ChatGPT or other US models?

In many areas, yes. Mistral’s Mixtral matched or beat GPT-3.5 on most standard benchmarks when it launched. DeepL outperforms Google Translate on many European language pairs. Aleph Alpha’s PhariaAI is purpose-built for regulated industries. The capability gap has closed significantly over the past two years.

Why does AI sovereignty matter for European citizens?

The tools people use daily shape what data leaves Europe, who can access it, and what values get embedded in AI decisions. When core digital infrastructure sits outside European jurisdiction, European law has limited practical reach. Keeping EU AI infrastructure closer to home means stronger data protection and more democratic control.

What does the EU AI Act mean for people using AI in Europe?

The EU AI Act sets rules for how AI systems can be built and used across the EU. Since February 2025, certain AI practices have been banned outright. From August 2026, high-risk AI systems (such as those used in hiring, credit, healthcare, and law enforcement) must meet strict transparency and safety requirements. For everyday users, the practical effect is stronger rights to know when AI is making decisions that affect them, and clearer accountability when something goes wrong.

Which EU AI tools can someone use right now?

Several are available today. Lumo by Proton is a privacy-first AI assistant with end-to-end encryption. DeepL is a high-quality translation tool built in Germany. Mistral AI offers open and commercial models via API. Aleph Alpha’s PhariaAI targets enterprise and public sector use.